I’ve been grinding through updates to meta-measured slowly but surely over the past few days. This work was started a while back but slowed to a stop when I got distracted by work on meta-selinux. Last week my work to upgrade the SELinux toolstack was merged upstream, so I’m re-focusing on getting meta-measured back in shape. This is a quick rundown of the changes.

General Maintenance and Release Tracking

I haven’t been chasing upstream much so most of this work has just been maintenance. The kernel in OE has moved forward, with 4.1 now the new stable in master. The read-only root file system class was broken when I tried a fresh master build the other day. When jumping in to start a refresh, finding core features broken can be a bit discouraging. Instead of taking on this fix immediately I figured it would be best to create a branch to track the latest release (fido) and get that working first. I’ve always avoided tracking OE releases because I don’t want to give the impression that meta-measured is in any way “stable”, as if having my master branch broken on a regular basis wasn’t a good enough indicator.

Seriously though, I kinda dropped the “chasing master” ball. Having a branch that follows the latest release makes getting everything up to date easier, and it’s a good insurance policy against future breakage in master. So win / win on that. Fido still has a functional readonly-rootfs class so, for the 2 other people out there who have kicked the tires on meta-measured, fall back to the release branch if master is broken. My goal is to keep at least one release branch in working order.

There are two changes between fido and the current master that have an impact on meta-measured. The first is the upgrade to GCC5. For the trousers and tpm-tools packages this requires a patch that I’ve gratefully borrowed from the hardworking people over at the Debian project. The other is meta-intel nuking the meta-nuc layer. This is a pretty minor change: on the master branch I’m now using the measured-intel-corei7-64 machine, which extends the intel-corei7-64 machine from meta-intel. Fido still has the NUC machine so I’ll stick to that for the fido branch.

systemd

The first external submission to meta-measured came in a few months back. This was to add systemd support to the trousers package. I had done something similar as part of some work to get meta-measured working with the Edison, but it was in the BSP layer. It really shouldn’t have been in the BSP layer to begin with, and my implementation was a hack regardless. The patch I received that adds this feature directly to meta-measured was well done, so I’ve merged it and removed my hack from the BSP.

The only bit I didn’t like from the patch was a utility script that loaded every TPM module under /lib/modules/$(uname -r). This seems a bit heavy-handed given that modern Linux kernels autoload these modules when the device is detected. It’s a pretty minor complaint though, and the systemd support is now in meta-measured proper.
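To make the point about autoloading a bit more concrete, here is a minimal Python sketch. It is not the contributed script, and the function names are made up for illustration. It assumes the TPM drivers live in the usual kernel/drivers/char/tpm directory of the modules tree and that a working TPM shows up as /dev/tpm0 (or similar). If the device node is already there, the kernel has loaded a driver on its own and there is nothing left to load by hand.

```python
import os
from pathlib import Path


def find_tpm_modules(modules_root):
    """List TPM driver module names found under a kernel modules tree.

    Assumes the standard layout <modules_root>/kernel/drivers/char/tpm.
    """
    tpm_dir = Path(modules_root) / "kernel" / "drivers" / "char" / "tpm"
    if not tpm_dir.is_dir():
        return []
    names = set()
    for path in tpm_dir.rglob("*.ko*"):
        # Drop ".ko" and any compression suffix such as ".ko.xz".
        names.add(path.name.split(".ko", 1)[0])
    return sorted(names)


def tpm_device_present(dev_dir="/dev"):
    """Return True if a TPM character device (tpm0, tpm1, ...) exists."""
    dev = Path(dev_dir)
    if not dev.is_dir():
        return False
    return any(dev.glob("tpm[0-9]*"))


if __name__ == "__main__":
    root = Path("/lib/modules") / os.uname().release
    if tpm_device_present():
        print("TPM device node found, a driver is already loaded")
    else:
        print("no TPM device node; candidate modules:", find_tpm_modules(root))
```

Something along these lines only considers loading modules when no device node exists, which is roughly the behaviour I would have preferred from the utility script.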

IMA and TPM2

I started playing around with configuring IMA a while back as a way to exercise the TPM2 that’s baked into the Minnowboard Max as part of PTT. I don’t have any specific plans to do much with IMA just yet, but with the xattrs patches I pushed into meta-selinux I’m hoping to be able to support IMA appraisal. An OE image booting with IMA appraisal enforced from first boot would be a great use case and a good demonstration of the utility of my xattr work.
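The reason the xattr work matters here is that IMA appraisal stores its reference value for each file in the security.ima extended attribute, and in enforcing mode files covered by the policy that lack a valid value are denied. A rough Python sketch of a sanity check for an unpacked image follows. The function names are hypothetical, it only checks that the attribute is present (not that it is valid), and it relies on os.getxattr, which is Linux-only and may need root to read the security namespace on some setups.

```python
import errno
import os

IMA_XATTR = "security.ima"


def read_ima_xattr(path, getxattr=None):
    """Return the raw security.ima value for path, or None if it has none.

    getxattr is injectable so the logic can be exercised without a
    filesystem that supports security xattrs; it defaults to os.getxattr.
    """
    if getxattr is None:
        getxattr = os.getxattr  # Linux-only
    try:
        return getxattr(path, IMA_XATTR)
    except OSError as exc:
        # ENODATA: attribute not set. ENOTSUP: filesystem has no xattrs.
        if exc.errno in (errno.ENODATA, errno.ENOTSUP):
            return None
        raise


def unlabeled_files(root, getxattr=None):
    """Walk root and return regular files that carry no security.ima."""
    missing = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            if os.path.islink(path) or not os.path.isfile(path):
                continue
            if read_ima_xattr(path, getxattr) is None:
                missing.append(path)
    return sorted(missing)
```

Running unlabeled_files() over a mounted rootfs would give a quick list of files that lost (or never got) their labels somewhere in the image build.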

UEFI Breakage

I’ve been saving the bad news for last, unfortunately. Somewhere between the changes that have been going on in OE, my bringing meta-measured up to date, and flashing the firmware on my IVB NUC, measured-image-bootimg broke. I’m pretty sure this is a problem with the latest firmware for the NUC but that’s a guess. Grub2 comes up OK but tboot doesn’t seem to take over.

In previous debugging I learned that tboot won’t log to VGA since UEFI doesn’t set up a text console for use by whatever executes after ExitBootServices() is called. It’s frame buffer only in the UEFI world, I guess. But tboot would still log to a serial device through direct I/O. Not this time though. Once Grub2 kicks off tboot my NUC is unresponsive and I get no additional output. Booting straight to the kernel from grub works fine but going the tboot route through multiboot2 isn’t working.

I’m pretty much out of debugging ideas here. My test system is getting a bit old and it’s the only UEFI system I have for use here so I’m writing this off as a loss for the time being. If anyone out there is testing this on a UEFI system I’d love to hear about whatever results you have to report.

Next steps

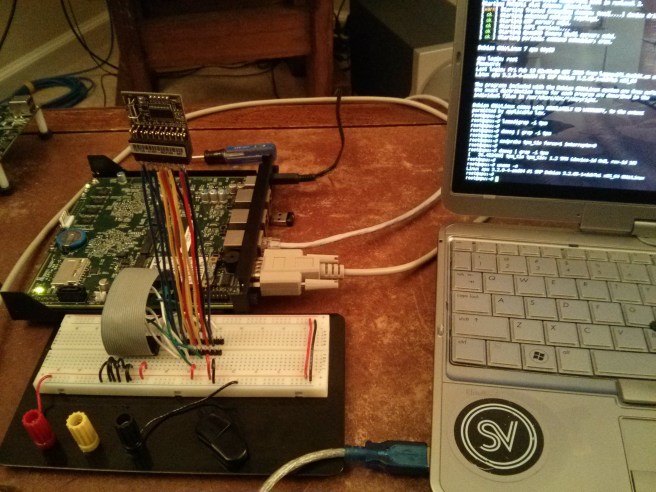

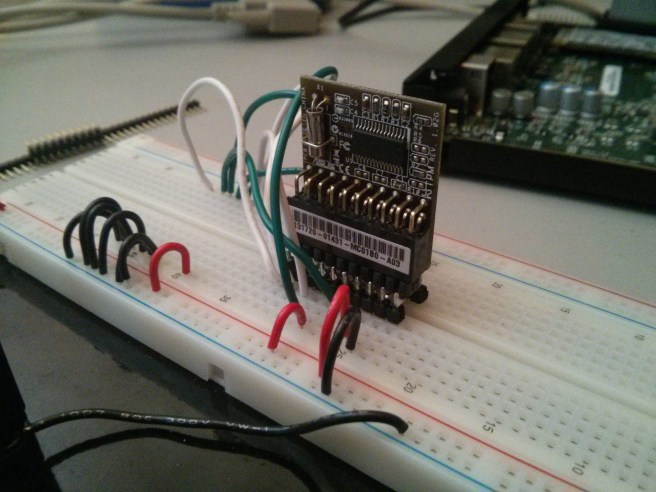

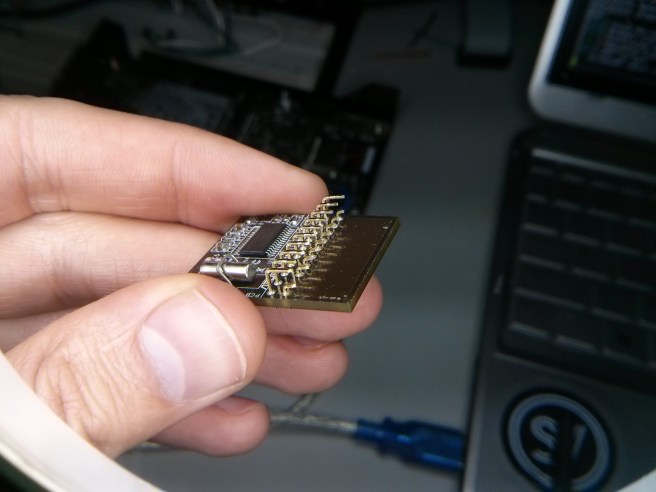

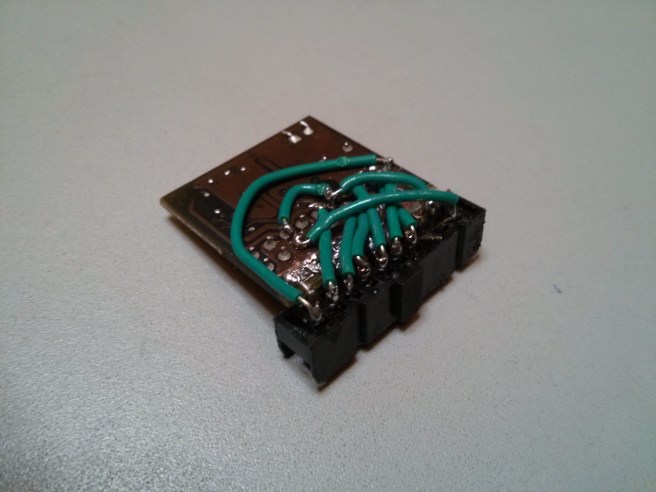

I’ve added the contributed systemd support to trousers but I haven’t enabled or tested systemd support for my reference images. This is next on my list of things to do. I previously got trousers working on a systemd-driven image in some work to wire a TPM up to an Edison system (more on this in the future, hopefully), but there I was working on the reference Edison images. Stay tuned and hopefully I’ll have proper systemd support in the near future.